I am making a calculator and want it to compute the bitwise NOT of a value as one of its functions. How could I do that?

here's the code:

#!/usr/bin/perl -w

print " this is a calculator that can perform multiplication, division, subtraction, addition, and can perform positive one digit exponents (to perform exponents put '^' in the operation, val2 is the exponent value) \n";

print "val1: ";

my $inp1 = <STDIN>;

print "oper: ";

my $oper = <STDIN>;

print "val2: ";

my $inp2 = <STDIN>;

my $val1;

my $val2;

chomp($inp1, $inp2, );

if ($inp1 eq 'pi')

{ $val1 = 3.1415926535897932385;}

elsif ($inp1 eq 'e')

{ $val1 = 2.718281828459045;}

else

{ $val1 = $inp1;}

if ($inp2 eq 'pi')

{ $val2 = 3.1415926535897932385;}

elsif ($inp2 eq 'e')

{ $val2 = 2.718281828459045;}

else

{ $val2 = $inp2;}

chomp($val1, $oper, $val2,);

if ($oper eq '+')

{print $val1 + $val2, "\n";}

elsif ($oper eq '-')

{print $val1 - $val2, "\n";}

elsif ($oper eq '/')

{print $val1 / $val2, "\n";}

elsif ($oper eq '*')

{print $val1 * $val2, "\n";}

#tried to get it to take the NOT value, but the output was a ? instead of the NOT value

elsif ($oper eq '~')

{print ~$val1, "\n";}

elsif ($oper eq '^')

{print $val1 ** $val2, "\n";}

else

{print "Error, not an operation this calculator can handle

\a";}

print "do another calculation? (1 or 0)\n";

my $again = <STDIN>;

chomp($again,);

if ($again eq 1)

{exec($^X, $0, @ARGV);}

else

{exit;}
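On the ? output: values read from STDIN are strings, and Perl's ~ applied to a string flips the bits of each character rather than treating the value as a number, which usually produces unprintable characters. A minimal sketch of one way around that, assuming whole-number input; bitwise_not is a hypothetical helper name, not something in the script above:

```perl
use strict;
use warnings;

# Hypothetical helper, not part of the original script.
sub bitwise_not {
    my ($input) = @_;
    use integer;            # signed integer arithmetic, so ~5 is -6 rather than a large unsigned value
    my $n = int($input);    # force a number; ~ on a string complements each character instead
    return ~$n;
}

print bitwise_not("5"), "\n";    # prints -6
print bitwise_not("-1"), "\n";   # prints 0
```

In the calculator, the '~' branch could then call bitwise_not($val1). Without use integer, ~5 on a perl built with 64-bit integers prints 18446744073709551610 instead, which may or may not be what a calculator user expects.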

There are two things that every Perl developer knows:

- Parsing HTML with regular expressions is a bad idea.

- My situation is unique and exceptional, such that parsing HTML with regular expressions is ingenious and optimal.

Let's see the task. I think you know where this is going.

Task 2: Double Words

You are given a string (which may contain embedded newlines) which is taken from a page on a website. The string will not contain brackets [].

Write a script that will find doubled words (such as “this this”) and highlight (wrap in brackets) each doubled word.

The script should:

- Work across lines, even finding situations where a word at the end of one line is repeated at the beginning of the next.

- Find doubled words despite capitalization differences, such as with 'The the...', as well as allow differing amounts of whitespace (spaces, tabs, newlines, and the like) to lie between the words.

- Find doubled words even when separated by HTML tags. For example, to make a word bold: '...it is <B>very</B> very important...'.

- Only show lines containing doubled words.

Adapted from Mastering Regular Expressions, Third Edition, by Jeffrey E. F. Friedl

Example 1

Input:

$str = "you're given the job of checking the pages on a\nweb server for doubled words (such as 'this this'), a common problem\nwith documents subject to heavy editing."

Output:

"web server for doubled words (such as '[this] [this]'), a common problem"

Example 2

Input:

$str = "Find doubled words despite capitalization differences, such as with 'The\nthe...', as well as allow differing amounts of whitespace (spaces,\ntabs, newlines, and the like) to lie between the words."

Output:

"Find doubled words despite capitalization differences, such as with '[The]\n[the]...', as well as allow differing amounts of whitespace (spaces,"

Example 3

Input:

$str = "to make a word bold: '...it is <B>very</B> very important...'."

Output:

"to make a word bold: '...it is <B>[very]</B> [very] important...'."

Example 4

Input:

$str = "Perl officially stands for Practical Extraction and Report Language, except when it doesn't."

Output:

""

Example 5

Input:

$str = "There's more than one one way to do it.\nEasy things should be easy and hard things should be possible."

Output:

"There's more than [one] [one] way to do it."

So it begins

The reference to Mastering Regular Expressions seems like a pretty strong hint. Back when I was doing HTML and XML processing, I always favored using a module to parse tagged text (XML::Twig was a particular favorite), but let's take the hint.

A sort of obvious thing to do here is to split the string into words, but it's going to be annoying because of the possibility of HTML tags. And once we break the string up, we'll lose the space and tags that we need to retain for the requested output.

"The string will not contain brackets" is an odd thing to say. But it turns out to be useful, because we only want to print output lines that do contain brackets, so this little clause is actually doing us a favor.

An outline

Let's assume that we can conjure up a regular expression to use with split to extract the words; let's call it $sepRE. We can then compare consecutive pairs of words to find duplication. That solves half the problem, but loses the context in the original string. We need to reproduce the original text, plus highlighting brackets, including all the stuff between words.

Because we have weird habits, we have (more than once) read through all the documentation of split, and so we know that there is a variation in which the function can return both the desired substrings and the separators. To wit, if we enclose the regular expression in parentheses, split will return all the pieces of the string, alternating between separators and substrings. The first piece we need looks like:

my @word = split( /($sepRE)/, $str);
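As a quick illustration of that split behaviour, here is a minimal sketch that uses plain whitespace as a stand-in for $sepRE (the strings are made up for the demonstration):

```perl
use strict;
use warnings;

# Capturing parentheses make split return the separators as well as the fields.
my @parts = split /(\s+)/, "one  two three";
print join("|", @parts), "\n";      # prints one|  |two| |three

# A separator at the very start of the string produces an empty first field.
my @lead = split /(\s+)/, " a b";
print scalar(@lead), "\n";          # prints 5: "", " ", "a", " ", "b"
```

Joining the pieces back together with an empty string reproduces the original text exactly, which is what makes this form useful when the separators have to survive.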

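Now the words and separators alternate in @word. Because split emits an empty leading field when the string starts with a separator, the words end up at the even indices either way; the check below for a leading separator is a cheap safeguard. We can check for duplicates by skipping along in pairs.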

# Take every other word. Skip over a leading separator.

my ($first, $second) = ( $word[0] =~ m/$sepRE/ )

? (1,3) : (0, 2);

while ( $second <= $#word)

{

my (@w) = ( $word[$first], $word[$second]);

if ( $w[0] eq $w[1] )

{

# \Q...\E stops characters in the word from being read as regex syntax

$word[$first] =~ s/(\Q$w[0]\E)/[$1]/;

$word[$second] =~ s/(\Q$w[1]\E)/[$1]/;

}

$first += 2; $second += 2;

}

That marks up the duplicate words, but only in the @word array. We need to face the problem of getting the desired output. To get there, put the @word array back into a single string, and then grep for the lines that contain our newly-inserted brackets.

# Reassemble the string, now with some words possibly bracketed

my $highlighted = join("", @word);

# Output includes only lines where we inserted brackets

return join "\n",

grep /[\[\]]/,

split(/\n/, $highlighted);

A few minor details

Case insensitivity. In our check for duplicates, we need to ignore case differences, so let's compare the lowercase versions of the words:

if ( lc($w[0]) eq lc($w[1]) )

Punctuation. As shown in Example 2, punctuation surrounding the words should be ignored. Let's whip up a quick function to trim leading and trailing punctuation (the [:punct:] character class is handy for this), and use it before we compare:

sub trimPunct($word)

{

$word =~ s/^[[:punct:]]+//;

$word =~ s/[[:punct:]]+$//;

return $word

}

. . .

my (@w) = ( trimPunct($word[$first]),

trimPunct($word[$second]));
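To check the trimming against Example 2, here is a small sketch. It repeats the function without a signature so that it also runs on older perls; the two tokens are the ones on either side of the line break in that example.

```perl
use strict;
use warnings;

# Same trimming as above, written without a signature.
sub trimPunct {
    my ($word) = @_;
    $word =~ s/^[[:punct:]]+//;
    $word =~ s/[[:punct:]]+$//;
    return $word;
}

# The two tokens around the line break in Example 2.
my @pair = map { lc(trimPunct($_)) } ("'The", "the...',");
print "$pair[0] $pair[1]\n";                               # prints the the
print $pair[0] eq $pair[1] ? "doubled\n" : "different\n";  # prints doubled
```

Only leading and trailing punctuation is removed, so an apostrophe inside a word such as There's is left alone.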

About that regular expression

Time to take care of that regular expression that acts as the word separator. With some experimentation (the regex101 web site is good for that), we arrived at this:

state $sepRE =

qr/ \s* # Possible white space followed by

(?: # ( a group of a tag and more white space

<[^>]*> # ... an HTML tag

\s* # ... optional space

)+ # ) there can be multiple tags

| \s+ # Or just white space

/x;

Not too bad. Didn't even require look-around assertions. Some notes:

- state $sepRE = qr/.../ -- This is a constant regular expression, so we can use state to evaluate it only once.

- qr//x -- The x flag allows us to add formatting and comments to the expression.

- (?:...) -- We want to group, but we don't need the overhead of capturing, so (?:) is a non-capturing group.

- complicated | \s+ -- Why is the complicated part first? Because the alternatives are evaluated left to right. If \s+ ate up the white space first, the expression would match and never proceed to trying tags.
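To see the separator at work, here is a minimal sketch that splits a shortened form of the Example 3 text. The pattern is the same one as above, compacted onto one line and held in a plain lexical instead of a state variable.

```perl
use strict;
use warnings;

# The separator from above, compacted onto one line.
my $sepRE = qr/ \s* (?: <[^>]*> \s* )+ | \s+ /x;

my @parts = split /($sepRE)/, "it is <B>very</B> very important";
print join("|", @parts), "\n";
# prints it| |is| <B>|very|</B> |very| |important
```

The tags travel with the whitespace inside the separators, so the two occurrences of very end up in neighbouring word positions and can be compared directly.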

Okay, here's the whole thing.

sub task($str)

{

state $sepRE = qr/ \s* # Possible white space followed by

(?: # ( a group of a tag and more white space

<[^>]*> # ... an HTML tag

\s* # ... optional space

)+ # ) There can be multiple tags

| \s+ # Or just white space

/x;

# Using () in RE includes separators in the array

my @word = split( /($sepRE)/, $str);

# Take every other word. Skip over a leading separator.

my ($first, $second) = ( $word[0] =~ m/$sepRE/ ) ? (1,3) : (0, 2);

while ( $second <= $#word)

{

my (@w) = ( trimPunct($word[$first]), trimPunct($word[$second]));

if ( lc($w[0]) eq lc($w[1]) )

{

# \Q...\E stops characters in the word from being read as regex syntax

$word[$first] =~ s/(\Q$w[0]\E)/[$1]/;

$word[$second] =~ s/(\Q$w[1]\E)/[$1]/;

}

$first += 2; $second += 2;

}

# Reassemble the string, now with some words possibly bracketed

my $highlighted = join("", @word);

# Output includes only lines where we inserted brackets

return join"\n", grep /[\[\]]/, split(/\n/, $highlighted);

}

And the music for this week's challenge was Double Vision by Foreigner.

While the programming languages with the current market share are loud and about on the web, where can one find the Perl community these days outside of Reddit? Is the majority of the community still on IRC? Or is it dispersed across different platforms?


RMG: separate email preparation from version bump

- make the perlpolicy update more prominent by adding a specific section header

- clarify the PERL_API constants instructions and make them release-specific

- move the end-of-support email instructions to the email section

Co-authored-by: Eric Herman <eric@freesa.org>

clarify the different cases of "bump the version number"

The version number must be bumped on separate occasions:

- when releasing a BLEAD-FINAL (or anything besides a BLEAD-POINT), bump the version at the beginning of the release process

- after releasing a BLEAD-POINT, bump the version to prepare for the next dev release (even if the next release is likely to be an RC or BLEAD-FINAL)

- after releasing a BLEAD-FINAL, bump the version to the new .0 for the new dev cycle

add 5.NEXT placeholder to the RMG

Co-authored-by: Eric Herman <eric@freesa.org>

Someone on Reddit asked how you can maintain your own version of an application while also being able to upgrade packages without getting conflicts between your changes and Debian's changes.

Answer

This is normal in Debian life. Debian usually solves this in one of three ways.

- .d config directories.

- Ordering

- dpkg-divert

Sudo, Apache, nginx, and LightDM all support the first way. Some packages, like unattended-upgrades, use the second way (ordering), and some, like minidlna, only allow for the third way.

LightDM might be a bit different: it follows order twice.

(A quick disclaimer before we begin: this session took place nearly 30 years ago. While the core structural concepts and my definitive…

Update Module::CoreList

Update after Module::CoreList release to CPAN

This week we were back to full strength. We have now dealt with all of the belated issues and all of the blockers. Paul will be shipping 5.43.11 very shortly. With the amount of changes we have had to merge, we will not be able to rush the .11 cycle, but we intend to start the work on the 5.44 RC early, to ensure that we can release with as little additional delay as possible.

The call to cond_signal is incorrect. It should be cond_broadcast.

This is why Thread::Queue is unreliable at cleanup.


Originally published at Perl Weekly 775

Hi there!

I try to keep track of the Perl-related events. You can find them listed at the bottom of each edition of the newsletter and on the events page on the Perl Weekly web site. There you can also find a link to embed the calendar in your calendar program. There are a number of events scheduled for this month. Most of them are online, so if your time-zone permits, you can join those events. The big in-person event at the end of the month is The Perl and Raku Conference in Greenville, South Carolina, USA.

In the last couple of weeks I have been using various AI tools extensively. They still need some hand-holding, but they already write code that seems to be way better than the average code I've seen. So I wonder, would it be possible to ask one of the AI tools to convert Python libraries to Perl? You know, we have been complaining for many years that companies provide implementations of their SDK/API/client in several languages, but not in Perl. We also saw that CPAN could not keep up with the growth of PyPI, npm and the other 3rd party library registries. So maybe some of you would like to explore the idea of converting some of these libraries to Perl using AI.

Finally, a personal note: I am planning a trip to Korea and Japan in September-October. If you live there and have any travel recommendations, I'd love to hear them.

Enjoy your week!

--

Your editor: Gabor Szabo.

Articles

Introducing ZuzuScript

Toby Inkster created a programming language which blends a fairly JavaScript-like syntax with fairly Perl-like semantics, and a few other features that he hasn't really seen in many programming languages.

ANNOUNCE: Perl.Wiki V 1.47, JSTree copy V 1.21

Teaching AI About the British Monarchy with MCP

The site already exposes information through a traditional web interface and a JSON API. But those interfaces were designed for humans and developers respectively. MCP gives AI systems a much cleaner integration point.

ANNOUNCE: Perl.Wiki V 1.46 & other news

TPRC Schedule Posted - Time to Submit Lightning Talks

Discussion

Dist::Zilla::PluginBundle::Starter: Use Readme::Brief instead of Pod2Readme?

Perl eval question

Oh, the evil eval.

Perl

This week in PSC (226) | 2026-05-25

The Weekly Challenge

The Weekly Challenge by Mohammad Sajid Anwar will help you step out of your comfort-zone. You can even win prize money of $50 by participating in the weekly challenge. We pick one champion at the end of the month from among all of the contributors during the month, thanks to the sponsor Marc Perry.

The Weekly Challenge - 376

Welcome to a new week with a couple of fun tasks "Chessboard Squares" and "Doubled Words". If you are new to the weekly challenge then why not join us and have fun every week. For more information, please read the FAQ.

RECAP - The Weekly Challenge - 375

Enjoy a quick recap of last week's contributions by Team PWC dealing with the "Single Common Word" and "Find K-Beauty" tasks in Perl and Raku. You will find plenty of solutions to keep you busy.

Common Beauty

This is an excellent example of how to use Raku's idiomatic style to perform frequency analysis with a very elegant way of using Bag data type and to make use of the %% operator for nice clean checks of divisibility by using Raku's built in primitives instead of having to write complex manual logic.

Perl Weekly Challenge: Week 375

It provides an excellent, straightforward exhibition on how to solve the problems associated with Weekly Challenge 375 in Raku and Perl. In addition to showing off some of the more sophisticated, expressive data types that Raku supports (i.e., Bag) Jaldhar shows how they were able to implement clever, easy-to-read workarounds in Perl to accomplish essentially the same thing.

Common Beauty

This blog post does a great job of examining and comparing practical solutions in Perl and in the J language. The way Jorg has generalised the problems leads to a sophisticated solution that accepts any number of bases and/or arrays as inputs and produces results of a high standard.

Perl Weekly Challenge 375

This post shows a clear-cut way to address a problem and demonstrate a solid grasp of Perl's function-based capabilities. It uses expressive one-line Perl statements to demonstrate how small, readable, and consistent code can be written to transform data.

A Single Beauty

This is an exemplary work featuring high-quality code that has a high degree of maintainability, uses excellent error check techniques and provides high levels of readability. In addition to using excellent reading technique, his solutions include well-documented logic and complete test suites.

You’ve got to get up every morning…

Packy has created an outstanding multi-language deep dive post comparing different implementations of Raku, Perl, Python, and Elixir. In addition to providing many details about each language's implementation, his approach is to focus on making it easy to read and easy to follow the logic behind each one in spite of their technical complexities. He shows us how we can take the same data structure using different paradigms to create different implementation solutions for the same problem.

Single and beautiful

This solution exemplifies how effective algorithms can be designed, using math formulas as a way of running multiple sets of frequencies over a single array. It’s an example of a very concise method of solving complex state-tracking issues, using as little code as possible while maintaining a high degree of readability.

The Weekly Challenge - 375: Single Common Word

A clear and systematically organised method for resolving this issue is provided in this post, in a very easy-to-read style that makes it simple to see the reasoning behind the solution. Among other things, the post presents its example test cases well and explains the technical details in a way that suits both new and experienced programmers.

The Weekly Challenge - 375: Find K-Beauty

This blog post exemplifies well-designed code that solves the "Find K-Beauty" problem in a strong manner. Reinier does an excellent job of providing clear, modular logic and using the least amount of code to implement those solutions. This allows the reader to see how the sliding window method works to find divisors in the number's format.

The Weekly Challenge #375

This post presents an incredibly complete and conversational analysis of the obstacles, illustrated with sophisticated technical knowledge through a thoroughly well-organised, educational format. Robbie's skill at blending solid, production-level Perl code with detailed explanation of the rationale behind it provides readers with an excellent foundation for either becoming familiarized with this form of problem solving or gaining greater depth of understanding regarding the "how" and "why" of idiomatic Perl.

Uncommon Beauty

Roger produced a very thorough, well-organised explanation of the problem, using techniques such as hash frequencies and sliding windows to create solutions that are both clean and accurate. By comparing the two different languages Perl and Scala, Roger shows that they clearly understand how to approach solving problems using multiple programming languages.

The Common Beauty

This is an excellent example of a bilingual coding approach. It contrasts high-level data structures in Python with Perl's more flexible but lower-level data manipulation. Simon offers a clear explanation of the two different languages used to explain how one would move algorithmic logic from one programming paradigm to another by providing step-by-step instructions and side-by-side examples.

Videos

JSON Schema at the Toronto Perl Mongers

Weekly collections

NICEPERL's lists

Great CPAN modules released last week.

Events

Exploring Perl Modules + using AI (online)

June 3, 2026

Boston Perl Mongers virtual monthly

June 9, 2026

Paris.pm monthly meeting

June 10, 2026

Purdue Perl Mongers (HackLafayette) - TBA

June 11, 2026

Berlin.pm - Naumanns Biergarten

June 24, 2026

Toronto.pm - June Social Evening

June 25, 2026

The Perl and Raku Conference 2026

June 26-29, 2026, Greenville, SC, USA


(C) Copyright Gabor Szabo

The articles are copyright the respective authors.

So I've created a programming language which blends a fairly JavaScript-like syntax with fairly Perl-like semantics, and a few other features that I haven't really seen in many programming languages.

This Perl:

use Path::Tiny;

my $str = uc(substr(Path::Tiny->new("myfile.txt")->slurp_utf8, 0, 80));

Becomes this in ZuzuScript:

from std/path import Path;

let str := new Path("myfile.txt")

▷ ^^.slurp_utf8

▷ ^^[0:80]

▷ uc ^^;

The ▷ operator means "evaluate the left side, then evaluate the right side with ^^ set to the result of the left side". It's conceptually similar to | in shells and seems to make a lot of expressions so much easier to understand.

Other features I like:

- Path/query operators for XPath/JSONPath-like deep access to nested objects.

- Built-in async/await.

- OO including roles/traits.

- Runs in the browser!

- PairLists (like hashes, but with ordered keys that allow duplicate keys)

- for/else

Familiar things from Perl: documentation uses pod, variables are lexical (actually almost everything is lexical), there's a topic variable (but it's called ^^ instead of $_), different operators for different data types (> for numbers but gt for strings), weak typing, keywords like say and warn, first class regexps, and a CPAN-like site for sharing modules.

The primary implementation is in Perl, but there are alternative implementations in Rust and JavaScript. Yes, this is coded with AI assistance.

More info: https://zuzulang.org/.

In Perl, is there an nsort function (to sort numerically) available in a mainstream CPAN module?

There is nsort_by in both List::MoreUtils and List::UtilsBy, but no nsort. Sort::Naturally has nsort, but I don't want a “natural” sort, but a plain numeric one.

Sure, I can do sort { $a <=> $b } but I'd like to do it in a cleaner way.
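Short of finding a module for it, one option is to wrap the comparison once and reuse it. A minimal sketch, where nsort is a hypothetical local helper and not taken from any CPAN distribution:

```perl
use strict;
use warnings;

# Hypothetical local helper; plain ascending numeric sort.
sub nsort { return sort { $a <=> $b } @_ }

my @sorted = nsort(10, 9, 100, 1);
print "@sorted\n";   # prints 1 9 10 100
```

This only tidies the call site; it does nothing that sort { $a <=> $b } does not already do.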

Both are available from my Wiki Haven.

Next step will be the validation module for CPAN::MetaCurator, using the new:

use feature 'class'

code.

After that, back to the re-write of all *.pm in CPAN::MetaCurator.

submitted by /u/davorg

- App::cpm - a fast CPAN module installer
  - Version: v1.1.1 on 2026-05-24, with 178 votes
  - Previous CPAN version: v1.1.0 was released 16 days before
  - Author: SKAJI

- App::DBBrowser - Browse SQLite/MySQL/PostgreSQL databases and their tables interactively.
  - Version: 2.442 on 2026-05-22, with 18 votes
  - Previous CPAN version: 2.441 was released 7 days before
  - Author: KUERBIS

- App::Netdisco - An open source web-based network management tool.
  - Version: 2.099004 on 2026-05-29, with 873 votes
  - Previous CPAN version: 2.099003 was released 1 day before
  - Author: OLIVER

- Archive::Tar - Manipulates TAR archives
  - Version: 3.10 on 2026-05-25, with 16 votes
  - Previous CPAN version: 3.08 was released 3 days before
  - Author: BINGOS

- Cpanel::JSON::XS - cPanel fork of JSON::XS, fast and correct serializing
  - Version: 4.41 on 2026-05-27, with 47 votes
  - Previous CPAN version: 4.40 was released 8 months, 19 days before
  - Author: RURBAN

- CPANSA::DB - the CPAN Security Advisory data as a Perl data structure, mostly for CPAN::Audit
  - Version: 20260524.001 on 2026-05-24, with 25 votes
  - Previous CPAN version: 20260517.001 was released 7 days before
  - Author: BRIANDFOY

- DateTime::Format::Natural - Parse informal natural language date/time strings
  - Version: 1.26 on 2026-05-28, with 19 votes
  - Previous CPAN version: 1.25_04 was released 1 day before
  - Author: SCHUBIGER

- Minion::Backend::SQLite - SQLite backend for Minion job queue
  - Version: v6.0.0 on 2026-05-26, with 14 votes
  - Previous CPAN version: v5.0.7 was released 3 years, 9 months, 12 days before
  - Author: DBOOK

- Mojo::SQLite - A tiny Mojolicious wrapper for SQLite
  - Version: v4.0.0 on 2026-05-25, with 28 votes
  - Previous CPAN version: 3.009 was released 4 years, 2 months, 2 days before
  - Author: DBOOK

- SPVM - The SPVM Language
  - Version: 0.990179 on 2026-05-29, with 36 votes
  - Previous CPAN version: 0.990178 was released the same day
  - Author: KIMOTO

- Template::Toolkit - comprehensive template processing system
  - Version: 3.106 on 2026-05-25, with 149 votes
  - Previous CPAN version: 3.105 was released the same day
  - Author: TODDR

- Test::MockModule - Override subroutines in a module for unit testing
  - Version: v0.185.2 on 2026-05-29, with 18 votes
  - Previous CPAN version: v0.185.1 was released 2 days before
  - Author: GFRANKS

- YAML::Syck - Fast, lightweight YAML loader and dumper
  - Version: 1.46 on 2026-05-25, with 18 votes
  - Previous CPAN version: 1.45 was released 1 month, 1 day before
  - Author: TODDR

One of the more interesting additions I’ve made recently to the Line of Succession website is support for the Model Context Protocol (MCP).

If you’ve spent any time around AI tooling recently, you’ve probably seen people talking about MCP. It’s often described as “USB for AI”, which is perhaps a little overblown, but the basic idea is sound. MCP provides a standard way for AI assistants to discover and use external tools and data sources.

In practical terms, it means that instead of building bespoke integrations for ChatGPT, Claude, Gemini and whatever comes next, you expose a standard MCP endpoint and let the AI clients do the rest.

For a data-driven site like Line of Succession, that seemed like an obvious experiment.

What is MCP?

The Model Context Protocol was originally developed by Anthropic and has rapidly become one of the emerging standards in the AI ecosystem.

An MCP server exposes:

- Information about itself

- A list of available tools

- Schemas describing how those tools should be called

- The results returned by those tools

An AI client can connect to the server, discover the available tools and invoke them when needed.

Instead of scraping web pages or attempting to infer information from HTML, the AI gets access to structured data.

That’s exactly the kind of thing Line of Succession is good at.

Why Add MCP?

The site already exposes information through a traditional web interface and a JSON API.

But those interfaces were designed for humans and developers respectively.

MCP gives AI systems a much cleaner integration point.

For example, an AI assistant can now answer questions like:

- Who was the British sovereign on 14 November 1948?

- What did the line of succession look like in 1980?

- Who was next in line when Queen Victoria died?

without having to scrape pages or understand the site’s internal URLs.

More importantly, it ensures that the information comes directly from the same database that powers the website.

The AI isn’t guessing.

It’s querying the source of truth.

As someone who runs a reference website, that’s a pretty attractive proposition.

The Initial Design

My first goal was to keep things simple.

Rather than exposing dozens of narrowly-focused tools, I started with just two:

- sovereign_on_date

- line_of_succession

Those two tools cover a surprisingly large proportion of the questions people are likely to ask.

The first returns the sovereign reigning on a given date. The second returns the line of succession for a specified date, with a configurable limit on the number of entries returned.

The implementation currently caps the list at thirty people. That’s enough for most use cases while preventing someone from accidentally asking for all six thousand people currently in the line of succession.

One thing I learned quite quickly is that MCP isn’t really about exposing huge amounts of data. It’s about exposing useful questions that can be answered from your data.

MCP Is Mostly JSON-RPC

One thing that surprised me when I first started reading the specification was how little protocol code is actually required.

At its core, MCP uses JSON-RPC.

A client sends requests like:

{

"jsonrpc": "2.0",

"id": 1,

"method": "tools/list"

}

and the server responds with:

{

"jsonrpc": "2.0",

"id": 1,

"result": {

...

}

}

Once I’d written helper methods for creating standard JSON-RPC responses, most of the complexity disappeared.

The MCP module contains methods like:

sub rpc_result ($self, $id, $result)

and:

sub rpc_error ($self, $id, $code, $message)

which means the Dancer route handlers remain pleasantly small.

The protocol logic lives in one place and the web application simply delegates to it.
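The article shows only the signatures of those helpers, so the following is a guess at their general shape rather than the site's actual code. It uses plain functions instead of methods, and JSON::PP (a core module) for encoding; the field layout follows JSON-RPC 2.0.

```perl
use strict;
use warnings;
use JSON::PP;

# Hypothetical stand-ins for the helper methods named above.
sub rpc_result {
    my ($id, $result) = @_;
    return { jsonrpc => '2.0', id => $id, result => $result };
}

sub rpc_error {
    my ($id, $code, $message) = @_;
    return { jsonrpc => '2.0', id => $id, error => { code => $code, message => $message } };
}

my $json = JSON::PP->new->canonical;    # canonical sorts keys, giving stable output
print $json->encode(rpc_result(1, { tools => [] })), "\n";
print $json->encode(rpc_error(2, -32601, 'Method not found')), "\n";
```

A route handler can then build either kind of reply in a single call, which is the property the article credits for keeping the Dancer routes small.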

Separating the MCP Logic

I didn’t want protocol-specific code scattered throughout the web application.

Instead, I created a dedicated module:

package Succession::MCP;

This module is responsible for:

- Initialisation

- Tool discovery

- Tool execution

- JSON-RPC response generation

- Error handling

That keeps the Dancer routes thin and makes the MCP implementation easier to test independently.

It also means that if I ever decide to expose the same MCP server through a different transport mechanism, most of the work is already done.

Tool Calls Are Mostly Adapters

One pleasant surprise was how little new application logic I actually had to write.

The MCP server needs to expose tools, but those tools ultimately just answer questions about the succession database. The code to answer those questions already existed.

For example, the application’s model layer already contained methods such as:

- sovereign_on_date()

- line_of_succession()

These methods power parts of the website itself, so they already encapsulate all of the business rules and database queries.

The MCP implementation simply acts as an adapter.

When a tool call arrives, the server extracts the arguments, validates them and passes them to the existing model methods:

sub _call_tool ($self, $tool_name, $args) {

my $tool = $self->_tool_dispatch->{$tool_name};

return $tool->($args);

}

The tool implementations themselves are deliberately thin:

sub sovereign_on_date ($self, $args) {

my $date = $args->{date};

my $sovereign = $self->model->sovereign_on_date($date);

...

}

That’s exactly how I wanted it to work.

The MCP layer doesn’t know how to calculate a line of succession or determine who was sovereign on a particular date. It simply knows how to expose those capabilities through the protocol.

This is one of the advantages of adding MCP to an existing application. If your business logic is already cleanly separated from your web interface, an MCP server often becomes surprisingly straightforward to implement.

In many ways, adding MCP feels less like building a new application and more like adding another interface alongside the website and API.
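The adapter idea can be sketched with a plain dispatch table. Everything here is illustrative: the table contents, the call_tool name and the unknown-tool handling are assumptions, and the real tools call into the site's model layer rather than returning a string.

```perl
use strict;
use warnings;

# Hypothetical dispatch table mapping tool names to code references.
my %tool_dispatch = (
    sovereign_on_date => sub {
        my ($args) = @_;
        return "lookup for $args->{date}";    # placeholder for the model call
    },
);

sub call_tool {
    my ($tool_name, $args) = @_;
    my $tool = $tool_dispatch{$tool_name}
        or die "Unknown tool: $tool_name\n";  # refuse names that are not registered
    return $tool->($args);
}

print call_tool('sovereign_on_date', { date => '1948-11-14' }), "\n";
# prints lookup for 1948-11-14

my $ok = eval { call_tool('no_such_tool', {}); 1 };
print "error: $@" unless $ok;
# prints error: Unknown tool: no_such_tool
```

Looking the name up before calling it means a mistyped tool name produces a clear error that can be turned into a JSON-RPC error response, instead of an attempt to call an undefined value.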

The YAML Epiphany

The most interesting design decision came a little later.

Initially, the tool definitions lived in Perl data structures.

That worked, but it quickly became obvious that I was duplicating information.

The MCP server needed tool descriptions.

The documentation page needed tool descriptions.

The schemas needed to be defined somewhere.

And every change required updating multiple places.

The obvious answer was to move all of the tool definitions into a YAML file.

The MCP module now loads its tool definitions at startup:

sub _build__tools ($self) { return LoadFile($self->tools_file); }

The result is a single source of truth.

The same YAML file drives:

- The tools/list response

- Tool metadata

- JSON schemas

- Human-readable documentation

Adding a new tool now involves updating one file and writing the code that implements it.

Everything else follows automatically.

Here’s the current YAML file:

# data/mcp-tools.yml
- name: sovereign_on_date
  description: Return the British sovereign on a given date.
  documentation: |
    Looks up the reigning British sovereign for the supplied date.
    Use this when answering questions such as “Who was sovereign on
    6 February 1952?”
  inputSchema:
    type: object
    properties:
      date:
        type: string
        description: Date in YYYY-MM-DD format.
    required:
      - date

- name: line_of_succession
  description: Return the line of succession on a given date.
  documentation: |
    Returns people in the line of succession.
    If no date is supplied, the current line of succession is returned.
  inputSchema:
    type: object
    properties:
      date:
        type: string
        description: Optional date in YYYY-MM-DD format. Omit for the current line of succession.
      limit:
        type: integer
        description: Maximum number of successors to return.
        minimum: 1
        maximum: 100
    required: []

Looking back, this is probably the part of the design I’m happiest with. It feels very Perl-ish: keep configuration as data and avoid duplicating information wherever possible.

Human Documentation Matters

One thing I noticed while exploring other MCP servers is that many of them are effectively invisible to humans.

You know an endpoint exists.

You know it speaks MCP.

But unless you inspect the protocol responses manually, you don’t really know what it does.

I decided to add a conventional web page at /mcp.

The page lists all available tools, their descriptions and their schemas.

The nice part is that there is no duplicated documentation.

The page is generated from the same YAML definitions used by the MCP server itself.

If I add a new tool tomorrow, both the machine-readable and human-readable views update automatically.
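To make the single-source idea concrete, here is a minimal sketch in Python rather than the Perl the site actually uses. It assumes the definitions have already been loaded into a list of dictionaries with the same keys as the YAML file; the function names and the plain-text layout are invented for illustration and say nothing about how the real /mcp page is rendered.

```python
import json

# Tool definitions as plain data, mirroring the keys of the YAML file
# (abridged to one tool; a real server would load these from the file).
TOOLS = [
    {
        "name": "sovereign_on_date",
        "description": "Return the British sovereign on a given date.",
        "documentation": "Looks up the reigning British sovereign for the supplied date.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "date": {"type": "string", "description": "Date in YYYY-MM-DD format."}
            },
            "required": ["date"],
        },
    }
]


def tools_list_result(tools):
    """Machine-readable view. Assumption: only these three keys are exposed."""
    keys = ("name", "description", "inputSchema")
    return {"tools": [{key: tool[key] for key in keys} for tool in tools]}


def docs_page(tools):
    """Human-readable view as plain text (hypothetical layout)."""
    lines = []
    for tool in tools:
        schema = tool["inputSchema"]
        lines.append(tool["name"])
        lines.append("  " + tool["documentation"].strip())
        for pname, spec in schema["properties"].items():
            flag = "required" if pname in schema.get("required", []) else "optional"
            lines.append(f"  - {pname} ({spec['type']}, {flag}): {spec['description']}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(json.dumps(tools_list_result(TOOLS), indent=2))
    print(docs_page(TOOLS))
```

Both functions read the same list, so a tool added to that list shows up in both views without any further edits.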

Structured Data and Text Responses

Another nice feature of MCP is that tool results can include both structured data and human-readable text.

For example, a tool response might contain:

{
  "content": [ {
    "type": "text",
    "text": "The sovereign on 14 November 1948 was George VI."
  } ],
  "structuredContent": {
    ...
  }
}

The structured content is useful for software.

The text is useful for humans and language models.

Both are generated from the same underlying data.

That gives AI clients flexibility while ensuring consistency.
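A small sketch of that idea, written in Python for illustration only: one record feeds both halves of the result. The keys inside structuredContent (date and sovereign) and the function names are hypothetical, since the real structure is not shown above.

```python
import json


def tool_result(record, text):
    """Wrap one record as a result with a text part and a structured part."""
    return {
        "content": [{"type": "text", "text": text}],
        "structuredContent": record,
    }


def sovereign_result(date_label, sovereign):
    # Hypothetical record layout; the real field names are not given in the post.
    record = {"date": date_label, "sovereign": sovereign}
    # The sentence is derived from the same record, so the two cannot disagree.
    text = f"The sovereign on {record['date']} was {record['sovereign']}."
    return tool_result(record, text)


if __name__ == "__main__":
    print(json.dumps(sovereign_result("14 November 1948", "George VI"), indent=2))
```

Because the sentence is built from the record rather than written separately, a correction to the underlying data changes both parts at once.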

Getting Listed

Once everything was working, I submitted the server to the MCP directory at mcpservers.org.

That might seem like a small step, but discoverability is important.

An MCP server hidden on a random website isn’t much use if nobody knows it exists.

Directories like that are rapidly becoming the equivalent of API catalogues for the AI era.

Being listed means developers and AI enthusiasts can find the service without first discovering the website.

Was It Worth It?

Absolutely.

The amount of code required was surprisingly small. Most of the work wasn’t implementing the protocol; it was deciding how best to expose the data.

More importantly, it opens the site up to an entirely new audience: AI agents.

Historically, websites were built for humans and APIs were built for developers.

MCP introduces a third category: services designed specifically for AI systems.

For a structured-data site like Line of Succession, that’s a natural fit.

Will MCP still be the dominant standard in five years’ time? I have no idea. The AI industry changes too quickly to make confident predictions.

But right now it has significant momentum, broad industry support and a growing ecosystem of tools.

And if nothing else, it’s rather satisfying to ask an AI who was on the throne on a particular date and know that the answer came directly from my database rather than from whatever the model happened to remember.

The post Teaching AI About the British Monarchy with MCP first appeared on Perl Hacks.

Problem is Bad arg length for Socket::unpack_sockaddr_in, see below.

repro

file /tmp/demo/app.psgi

use 5.042;

use strictures;

use Plack::Request qw();

use experimental 'signatures';

my $app = sub($env) {

my $req = Plack::Request->new($env);

return $req->new_response(200, ['Content-Type' => 'text/plain'], ['Hello world'])->finalize;

};

file ~/.config/systemd/user/demo.service, replace … with absolute path to plackup

[Unit]

Description=Socket-activated hello world service

Requires=demo.socket

After=network.target

[Service]

ExecStart=…perl/5.42.2/bin/plackup -a /tmp/demo/app.psgi

Type=simple

file ~/.config/systemd/user/demo.socket

[Unit]

Description=Socket for hello world service activation

PartOf=demo.service

[Socket]

ListenStream=5000

NoDelay=true

Backlog=128

[Install]

WantedBy=sockets.target

patch Plack-1.0054

diff --git a/lib/HTTP/Server/PSGI.pm b/lib/HTTP/Server/PSGI.pm

index 225d4ca..f060463 100644

--- a/lib/HTTP/Server/PSGI.pm

+++ b/lib/HTTP/Server/PSGI.pm

@@ -90,6 +90,12 @@ sub prepare_socket_class {

sub setup_listener {

my $self = shift;

+ # TO DO: also handle LISTEN_PID LISTEN_PIDFDID

+ if ($ENV{LISTEN_FDS} && 1 == $ENV{LISTEN_FDS}) {

+ $self->{listen_sock} ||= IO::Socket::INET->new_from_fd(3, 'r+');

+ goto DONE;

+ }

+

$self->{listen_sock} ||= do {

my %args = (

Listen => SOMAXCONN,

@@ -104,6 +110,7 @@ sub setup_listener {

or die "failed to listen to port $self->{port}: $!";

};

+DONE:

$self->{server_ready}->({ %$self, proto => $self->{ssl} ? 'https' : 'http' });

}

- apply patch, install patched Plack where app.psgi can use it

- make port 5000 available (perhaps a Plack app is already occupying it?)

- run systemctl --user daemon-reload

- run systemctl --user enable --now demo.socket

observed problem

Run xh -v :5000 or use any other HTTP client to GET http://localhost:5000, no response.

journalctl --user -e -u demo -f shows the error:

systemd: Started Socket-activated hello world service.

plackup: HTTP::Server::PSGI: Accepting connections at http://0:5000/

plackup: Bad arg length for Socket::unpack_sockaddr_in, length is 28, should be 16 at …perl/5.42.2/lib/5.42.2/x86_64-linux-ld/Socket.pm line 858.

systemd: demo.service: Main process exited, code=exited, status=29/n/a

systemd: demo.service: Failed with result 'exit-code'.

To start over: systemctl --user stop demo.service ; systemctl --user restart demo.socket

expected result

HTTP request finishes

relevant

in dist IO: new_from_fd documentation, new_from_fd test

to clean up the system

systemctl --user disable --now demo.socket

rm ~/.config/systemd/user/demo.{service,socket} /tmp/demo/app.psgi

# rm -rf …Plack-1.0054

systemctl --user daemon-reload

The new Perl.Wiki.html V 1.46 & the JSTree version V 1.20 are available from my

Wiki Haven.

Other news:

(a) CPAN::MetaCurator:

Status: On github & on CPAN.

There are some issues:

1. It is becoming increasingly awkward to process the records read from the database

in order to transform them into JSTree stuff, so I am going to re-write the code to firstly

read the db & store the data in a Tree::DAG_Node. That way the data more nearly

matches the JSTree structure so the common cases & all the special cases (FAQ,

See Also, pre-formatted paras, etc) are more easily processed.

2. I use Moo in this module as I do so often but in CPAN::MetaCurator::Export I must

have done something weird because sub BUILD is not called & I can't over-ride various

defaults by passing options to new(). Solution: Re-write whole module using Perl's new

feature 'class', thereby teaching myself how to do that & hopefully fixing the over-ride

problem. This new code is temporarily called CPAN::MetaXPAN. It's on github but is

rudimentary at best. Avert your eyes.

Back to CPAN::MetaCurator...

3. It contains a module, CPAN::MetaCurator::Search, which reads a file of module names

& reports whether or not they are in Perl.Wiki.html. It is part of the future automation of the

process of adding modules to Perl.Wiki.html.

4. The next extension will be CPAN::MetaCurator::Validate, which will solely validate the

format of tiddlers.json as exported from Perl.Wiki.html, just looking for typos.

(b) CPAN::MetaPackager:

Status: On github & on CPAN.

Do nothing.

(c) CPAN::MetaBuiltin:

Status: Nothing in this module has been written yet, pending the outcome of the above

feature 'class' experiment. I used perlbrew to install 2 versions of Perl, so then I can scan

those & put the list of modules shipped with each Perl into a single SQLite db. Then that

db can be read by CPAN::MetaCurator & that will enable me to annotate the modules

listed in the JS tree with a flag indicating the module ships with some versions of Perl. It is

currently /not/ my intention to list all versions of Perl the modules ship with.

(d) CPAN::MetaDeprecated:

Status: Nothing in this module has been written yet, pending the outcome of the above

feature 'class' experiment.

I plan to do with deprecated modules exactly as I've just outlined with builtin modules.

Hold your collective breaths. Upon turning blue cease breathing/holding your breath/whatever.

(e) Retirement plans. Part of the reason for spelling all this out is that next month,

June 2026, I will be 76 (much to my amazement & horror :-) & am hoping someone will

one day take an interest in adopting these modules after I stop coding.

Further, I'm currently waiting for a time to be announced for an 'emergency' surgery due

to bleeding in the bowel. It's almost certainly colonic polyps, a few of which I had removed

9 years ago. So the beast is known, but still not pleasant. If I survive the op I will report

back :-).

TPRC hotel pricing and early-bird pricing end at midnight (Eastern Time) on May 28th. If you haven’t registered and reserved your hotel room, today is the day!

The conference schedule is now published. We have a lineup of great speakers! Check it out at https://tprc.us/tprc-2026-gsp/schedule-2/ .

We are also now accepting submissions for Lightning Talks, which are talks of no more than 5 minutes. They are a great way to participate, and make a contribution to the community! See https://tprc.us/tprc-2026-gsp/lightning-talks/ to submit a talk or to learn more about them.

Did you know that this conference gave Lightning Talks their name? It is true: the name dates back to 2000, and we are proud to have an unbroken streak of Gongs ever since! See https://en.wikipedia.org/wiki/Lightning_talk and https://www.youtube.com/@YAPCNA/search?query=lightning%20talk .

Paul is away this week so only Leon and Aristotle were present. A number of the belated-for-this-cycle issues have been addressed this week and we are following up on the remaining ones. We do not yet have a firm commitment for the release manager for 5.43.11.

Weekly Challenge 375

Each week Mohammad S. Anwar sends out The Weekly Challenge, a chance for all of us to come up with solutions to two weekly tasks. My solutions are written in Python first, and then converted to Perl. Unless otherwise stated, Copilot (and other AI tools) have NOT been used to generate the solution. It's a great way for us all to practice some coding.

Task 1: Single Common Word

Task

You are given two arrays of strings.

Write a script to return the number of strings that appear exactly once in each of the two given arrays. String comparison is case sensitive.

My solution

For input from the command line, I take two space-separated strings to generate the two arrays.

For this task, I start by counting the frequency of words in each list (array in Perl).

from collections import Counter

def single_common_word(array1: list[str], array2: list[str]) -> int:
    freq1 = Counter(array1)
    freq2 = Counter(array2)

I then create two sets of words which only appear once in each list.

    unique1 = {word for word in freq1 if freq1[word] == 1}
    unique2 = {word for word in freq2 if freq2[word] == 1}

Finally, I return the length of the intersection of both sets.

    return len(unique1 & unique2)

The Perl solution follows a similar logic. Using the scalar function will return the number of items in the array.

use Array::Utils 'intersect';

sub main ( $str1, $str2 ) {

my @array1 = split /\s+/, $str1;

my @array2 = split /\s+/, $str2;

my ( %freq1, %freq2 );

$freq1{$_}++ foreach @array1;

$freq2{$_}++ foreach @array2;

my @unique1 = grep { $freq1{$_} == 1 } keys %freq1;

my @unique2 = grep { $freq2{$_} == 1 } keys %freq2;

say scalar( intersect( @unique1, @unique2 ) );

}

Examples

$ ./ch-1.py "apple banana cherry" "banana cherry date"

2

$ ./ch-1.py "a ab abc" "a a ab abc"

2

$ ./ch-1.py "orange lemon" "grape melon"

0

$ ./ch-1.py "test test demo" "test demo demo"

0

$ ./ch-1.py "Hello world" "hello world"

1
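As a quick check, the Python fragments above can be assembled into one function and run against the five examples. This is a sketch that simply joins the pieces; the list of examples at the bottom is added here for illustration and is not part of the original script.

```python
from collections import Counter


def single_common_word(array1: list[str], array2: list[str]) -> int:
    # Count how often each word appears in each list.
    freq1 = Counter(array1)
    freq2 = Counter(array2)

    # Keep only the words that appear exactly once in their own list.
    unique1 = {word for word in freq1 if freq1[word] == 1}
    unique2 = {word for word in freq2 if freq2[word] == 1}

    # Words that are unique in both lists.
    return len(unique1 & unique2)


if __name__ == "__main__":
    examples = [
        ("apple banana cherry", "banana cherry date"),
        ("a ab abc", "a a ab abc"),
        ("orange lemon", "grape melon"),
        ("test test demo", "test demo demo"),
        ("Hello world", "hello world"),
    ]
    for str1, str2 in examples:
        print(single_common_word(str1.split(), str2.split()))
```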

Task 2: Find K-Beauty

Task

You are given a number and a digit k.

Write a script to find the K-Beauty of the given number. The K-Beauty of an integer is defined as the number of substrings of the number, read as a string, that have length k and are divisors of the number.

My solution

This is relatively straightforward. Python treats integers and strings as different types (with different methods available on each). The first thing I do is convert num to a string called s.

def k_beauty(num: int, k: int) -> int:
    s = str(num)

I then generate all possible substrings of length k and check whether each one evenly divides the original number. If it does, I increment the count variable. At the end, I return the final count value.

    count = 0
    for start in range(len(s) - k + 1):
        if num % int(s[start:start+k]) == 0:
            count += 1

    return count

Perl doesn't care (most of the time) about integers vs strings. This makes the code a little more straightforward.

sub main ( $num, $k ) {

my $count = 0;

foreach my $start ( 0 .. length($num) - $k ) {

if ( $num % substr( $num, $start, $k ) == 0 ) {

++$count;

}

}

say $count;

}

Examples

$ ./ch-2.py 240 2

2

$ ./ch-2.py 1020 2

3

$ ./ch-2.py 444 2

0

$ ./ch-2.py 17 2

1

$ ./ch-2.py 123 1

2
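One input the examples do not exercise is a substring made up entirely of zeros, such as the 00 in 1001 with k = 2. Both versions above would then attempt a modulus by zero, which is a runtime error in Python and in Perl. Here is a minimal sketch of a guarded variant, assuming such substrings should simply not be counted (the task description does not say):

```python
def k_beauty(num: int, k: int) -> int:
    s = str(num)
    count = 0
    for start in range(len(s) - k + 1):
        divisor = int(s[start:start + k])
        # Assumption: an all-zero substring is skipped rather than treated as an error.
        if divisor != 0 and num % divisor == 0:
            count += 1
    return count


if __name__ == "__main__":
    for num, k in [(240, 2), (1020, 2), (444, 2), (17, 2), (123, 1), (1001, 2)]:
        print(num, k, k_beauty(num, k))
```

The five original examples give the same results as before; the guard only matters for inputs like 1001.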

Originally published at Perl Weekly 774

Hi there,

I know you are all patiently waiting for the release of Perl v5.44. I recently joined the perl5-porters mailing list, which delivers all the latest updates straight to your inbox. That is where I found out that v5.44 should be out at the end of next month if everything goes to plan. I get excited at this time of year to see what has been added to the core class feature. I am still waiting for support for roles, though I am aware there are other high-priority features on the list. No pressure on Paul Evans and his team; we are happy to see the language progress under your guidance.

Well, you don't have to wait for the big release to get your hands dirty. For me, I am busy exploring things like Big O Notation, JSON Web Token (JWT), OAuth2 and gRPC. There is plenty more in the pipeline too.

If you are looking for something new then you should check out Aspire in Perl. While going through the post, I came across another gem, OpenTelemetry SDK for Perl, thanks to JJ Atria. It's going on my TODO list. One day, I will take a closer look.

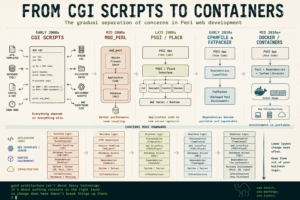

Web development in Perl has seen it all since the CGI Era (1990s). First came PSGI/Plack, the middleware revolution, followed by the rise of frameworks like Catalyst, Dancer2 and Mojolicious. Dave Cross has written a very detailed post about The Long Road From CGI To Containers.

Plenty of posts this week to keep you busy all day, enjoy.

--

Your editor: Mohammad Sajid Anwar.

Announcements

TPRC is about 30 days from now!

TPRC is nearly a month away. A quick reminder to everyone about the early bird discount: if you are planning to attend TPRC, make sure you take advantage of it.

Articles

This week in PSC (225) | 2026-05-18

The announcement of the 5.43.11 dev release is the highlight of this week's update.

The Camel Cometh

Find out the story behind Perl support in Aspire. It is a very inspiring and honest story. I wouldn't say I understood everything, but it sparked my interest. You should check it out yourself.

The Long Road from CGI to Containers

What a journey CGI has had. The post reminds me of some of my past encounters with CGI, like printing HTML tags inside the script. Although using it in raw form is not recommended for web development these days, I am still one of the maintainers of CGI::Simple.

Big O Notation

This story is for all techies without a computer science background who have yet to crack the mystery of time/space complexity. I really enjoyed the journey and I am sure you will love it too.

JWT with Dancer2

JSON Web Token (JWT) has come up in discussion many times in the past, but I never had the chance to look at it in detail. Last week I finally found time to get to the bottom of it. This post explains what it is and how it works.

OAuth2 with Dancer2

OAuth2 is nothing new to many techies, but I stayed away from it for a long time. I found the topic too complex and kept putting off exploring it. Now that I have seen it inside out, it seems like a walk in the park.

Strategy Design Pattern

Every time I talk about design patterns, I get excited like a little boy who found his favourite toy. Whenever I feel down, I pick up my book, Design Patterns in Modern Perl, and read a design pattern. My all-time favourite is the Singleton design pattern. How about yours?

REST vs gRPC

gRPC was something I had wanted to explore for a long time. In this post, I share my investigation so far. I know I have only scratched the surface and there is still plenty to explore.

CPAN

App::Test::Generator v0.39

Plenty of bug fixes and enhancements in this release, as shared by Nigel. The Changes file lists the details.

Grants

Maintaining Perl 5 Core (Dave Mitchell): April 2026

PEVANS Core Perl 5: Grant Report for April 2026

I noticed the mention of the optional chaining PPC in the report. If it is what I think it is, then I am super happy that it is in safe hands now. I can't wait to see the end result. Thank you Paul.

Maintaining Perl (Tony Cook) April 2026

The Weekly Challenge

The Weekly Challenge by Mohammad Sajid Anwar will help you step out of your comfort-zone. You can even win prize money of $50 by participating in the weekly challenge. We pick one champion at the end of the month from among all of the contributors during the month, thanks to the sponsor Marc Perry.

The Weekly Challenge - 375

Welcome to a new week with a couple of fun tasks "Single Common Word" and "Find K-Beauty". If you are new to the weekly challenge then why not join us and have fun every week. For more information, please read the FAQ.

RECAP - The Weekly Challenge - 374

Enjoy a quick recap of last week's contributions by Team PWC dealing with the "Count Vowel" and "Largest Same-digits Number" tasks in Perl and Raku. You will find plenty of solutions to keep you busy.

Largest Vowel

This is an excellent post describing a different way of using Raku. It demonstrates the effectiveness of Raku by utilising such features as 'gather/take' and powerful sequence grouping to make complex string processing simpler than ever before. In addition to being a great way to learn about Raku, this post is also an opportunity for developers to see how the author has reused existing code to produce a clean, modular program.

All Vowels Are Equal

The post demonstrates an extremely sophisticated approach to the challenge. It uses Perl's advanced regex features, including look-ahead assertions and the (?{...}) code block.

The Weekly Challenge 374

The code provided is a highly legible and clear way of handling the challenge, making use of commonly used data structures to simplify complex string processing tasks. It is very pleasing to see this clean, parallel logic presented in both Perl and Python, which improves the maintainability of both versions and provides a great deal of educational value as well.

Perl Weekly Challenge 374

This is a fantastic example of how to reduce large-scale problems to highly efficient solutions in very few lines of code, showcasing Perl’s ability to express difficult problems succinctly. It is also a great example of creative use of regex backreferences to locate and manage repeating patterns while minimising the amount of code required.

Hey, won’t you play…

This post showcases some great, multi-lingual solutions to this week's challenge by providing clean and idiomatic code examples in Perl, Raku, Python, and Elixir. Packy explains the thought process and the differences between lookaheads in regular expressions. This makes the article a great teaching tool for discovering how various languages solve equivalent algorithm problems.

Lots of repetition

Peter provides a well-reasoned and pragmatic assessment of the problem at hand and presents readable, non-complex code. He broke up all vowel characters in the string into separate chunks prior to applying a sub-substring check which provides an excellent example of performance optimisation by successfully processing very long strings with a relatively low number of vowels in that string to produce an acceptable processing time.

The Weekly Challenge - 374: Count Vowel

This post takes an insightful view of the vowel-counting challenge by drawing an analogy between programming logic and 18th-century music theory. The "Prefix/Cell/Suffix" model gives a unique and clear technical explanation of the use of bitmasks for tracking vowel completion, making the bitmask solution to this challenge feel elegant and well thought out.

The Weekly Challenge - 374: Largest Same-digits Number

In this post, you'll find a brief yet highly efficient method of solving the "Largest Same-digits Number" challenge. The method relies on a single-pass regular expression as an elegant means to perform pattern matching with maximum effectiveness, and exemplifies good understanding of the high performance features of Perl.

The Largest Count

The post shows how to solve the problems in both Rust and Perl. It also demonstrates how to take advantage of the strengths of each language (type-safe collections in Rust vs concise string searches in Perl) to carry out the tasks efficiently.

Vowels and numbers

The post explains the need to clearly specify the task requirements for the "Largest Same-digit Number" challenge. It shows that an ambiguous requirement has caused different solutions to develop and serves to remind us that "coding to the examples" can be just as important as "coding to the original problem description."

Rakudo

2026.20 Slangification

Weekly collections

NICEPERL's lists

Great CPAN modules released last week.

Events

Toronto.pm - online - JSON Schema: language-agnostic typing / May TPM meeting

May 29, 2026

Exploring Perl Modules + using AI

June 3, 2026

Boston Perl Mongers virtual monthly

June 9, 2026

The Perl and Raku Conference 2026

June 26-29, 2026, Greenville, SC, USA

You joined the Perl Weekly to get weekly e-mails about the Perl programming language and related topics.

Want to see more? See the archives of all the issues.

Not yet subscribed to the newsletter? Join us free of charge!

(C) Copyright Gabor Szabo

The articles are copyright the respective authors.

-

App::FatPacker::Simple - only fatpack a script

- Version: v1.0.2 on 2026-05-22, with 12 votes

- Previous CPAN version: v1.0.1 was released the same day

- Author: SKAJI

-

App::Netdisco - An open source web-based network management tool.

- Version: 2.099000 on 2026-05-20, with 868 votes

- Previous CPAN version: 2.098005 was released 10 days before

- Author: OLIVER

-

Archive::Tar - Manipulates TAR archives

- Version: 3.08 on 2026-05-22, with 16 votes

- Previous CPAN version: 3.06 was released 12 days before

- Author: BINGOS

-

Attean - A Semantic Web Framework

- Version: 0.038 on 2026-05-20, with 19 votes

- Previous CPAN version: 0.037 was released the same day

- Author: GWILLIAMS

-

Cache::FastMmap - Uses an mmap'ed file to act as a shared memory interprocess cache

- Version: 1.62 on 2026-05-18, with 25 votes

- Previous CPAN version: 1.61 was released 17 days before

- Author: ROBM

-

Catalyst::Plugin::Authentication - Infrastructure plugin for the Catalyst authentication framework.

- Version: 0.10026 on 2026-05-21, with 12 votes

- Previous CPAN version: 0.10_025 was released 1 day before

- Author: ETHER

-

CPANSA::DB - the CPAN Security Advisory data as a Perl data structure, mostly for CPAN::Audit

- Version: 20260517.001 on 2026-05-17, with 25 votes

- Previous CPAN version: 20260503.001 was released 12 days before

- Author: BRIANDFOY

-

HTML::Parser - HTML parser class

- Version: 3.85 on 2026-05-19, with 51 votes

- Previous CPAN version: 3.84 was released the same day

- Author: OALDERS

-

HTTP::Daemon - A simple http server class

- Version: 6.17 on 2026-05-19, with 13 votes

- Previous CPAN version: 6.16 was released 3 years, 2 months, 23 days before

- Author: OALDERS

-

HTTP::Tiny - A small, simple, correct HTTP/1.1 client

- Version: 0.094 on 2026-05-17, with 116 votes

- Previous CPAN version: 0.093 was released 5 days before

- Author: HAARG

-

IO::Compress - IO Interface to compressed data files/buffers

- Version: 2.220 on 2026-05-16, with 20 votes

- Previous CPAN version: 2.219 was released 2 months, 7 days before

- Author: PMQS

-

Minion - Job queue

- Version: 12.0 on 2026-05-18, with 236 votes

- Previous CPAN version: 11.0 was released 8 months, 26 days before

- Author: SRI

-

Role::Tiny - Roles: a nouvelle cuisine portion size slice of Moose

- Version: 2.002005 on 2026-05-17, with 72 votes

- Previous CPAN version: 2.002004 was released 5 years, 3 months, 24 days before

- Author: HAARG

-

Sereal - Fast, compact, powerful binary (de-)serialization

- Version: 5.006 on 2026-05-20, with 65 votes

- Previous CPAN version: 5.005 was released the same day

- Author: YVES

-

Sereal::Decoder - Fast, compact, powerful binary deserialization

- Version: 5.006 on 2026-05-20, with 26 votes

- Previous CPAN version: 5.005 was released the same day

- Author: YVES

-

Sereal::Encoder - Fast, compact, powerful binary serialization

- Version: 5.006 on 2026-05-20, with 25 votes

- Previous CPAN version: 5.005 was released the same day

- Author: YVES

-

Template::Toolkit - comprehensive template processing system

- Version: 3.103 on 2026-05-21, with 149 votes

- Previous CPAN version: 3.102 was released 1 year, 10 months, 29 days before

- Author: TODDR

-

WWW::Mechanize::Chrome - automate the Chrome browser

- Version: 0.77 on 2026-05-17, with 22 votes

- Previous CPAN version: 0.76 was released 2 months, 4 days before

- Author: CORION

-

XML::LibXML - Interface to Gnome libxml2 xml parsing and DOM library

- Version: 2.0213 on 2026-05-21, with 103 votes

- Previous CPAN version: 2.0212 was released 1 day before

- Author: TODDR

A few years ago I wrote some Perl code using JSON. It still worked in SLES15 SP6, but in SLES15 SP7 I get an error like this:

Modification of a read-only value attempted at (eval 73)[/usr/lib/perl5/vendor_perl/5.26.1/JSON.pm:287] line 63.

The code in question is:

use JSON -support_by_pp;

#...

use constant JSON_INDENT => JSON->new(); # indenting JSONizer

JSON_INDENT->utf8(1);

JSON_INDENT->indent(1);

JSON_INDENT->indent_length(0);

If I comment out the last line, then the error does not occur.

(I use the constant in code like print JSON_INDENT->pretty->encode($JSON), "\n")

For the SP upgrade I noticed these related changes:

Package perl-JSON-XS-4.40.0-bp157.2.3.1.x86_64 was added, and package perl-Cpanel-JSON-XS was upgraded from 4.37-bp156.2.3.1 to 4.380.0-150700.3.3.1 (both x86_64).

What is the problem, and how to resolve it?

The package code in question is:

sub _load_xs {

my ($module, $opt) = @_;

__load_xs($module, $opt) or return;

my $data = join("", <DATA>); # this code is from Jcode 2.xx.

close(DATA);

eval $data;

JSON::Backend::XS->init($module);

return 1;

};

Line 287 is eval $data;.

Just as a very small example, let's say I have the following:

s/foo//g

and I run it with perl -p and the following input:

foo foo foo

foo bar

bar bar

foo bar bar

foo

This would output:

bar

bar bar

bar bar

The lines that only consisted of foo are now blank (aside from the spaces that were between them, if any). For these lines, I would like to eliminate them, but leave any originally blank lines alone.

One way I thought to do this would be to replace foo with some specially recognized string instead, say X, and then any line only consisting of Xs gets deleted, but choosing the right string (instead of X) would be a challenge for general input. Also, then it goes in two passes, which is less fun.
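A single-pass alternative to the marker idea is to test each line twice, once before the substitution and once after, and to drop the line only when it had content before and has none afterwards. Here is a rough sketch of that logic in Python; the function name strip_word is made up for illustration, and the sketch works on lines without their trailing newline.

```python
import re


def strip_word(lines, word="foo"):
    """Remove every occurrence of word; drop lines that the removal emptied."""
    pattern = re.compile(re.escape(word))
    for line in lines:
        replaced = pattern.sub("", line)
        # Drop the line only if it had content before and has none now.
        # Lines that were blank to begin with pass through unchanged.
        if line.strip() and not replaced.strip():
            continue
        yield replaced


if __name__ == "__main__":
    sample = ["foo foo foo", "foo bar", "", "bar bar", "foo bar bar", "foo"]
    for out in strip_word(sample):
        print(out)
```

No special marker string is needed, because the decision is made from the line's state before and after the substitution rather than from its content afterwards alone.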

It is just over 30 days before the start of The Perl and Raku Conference. Even if you are a procrastinator, it’s time to make your plans to attend!

Early bird pricing for registration will end on May 28. Special conference pricing for our block of hotel rooms also expires on May 28. So it is definitely time to get yourself registered and to get your room reserved.

Speakers have been notified, and the schedule is being built.

Go to https://tprc.us for all the information about how to register, how to reserve your room, and to see the schedule once it is posted.

When you are making your travel plans, consider the following: On Monday, June 29, we are offering a class: Teaching AI New Tricks: Perly MCP’s for Claude. This class is described at https://tprc.us. Registration for the class is a separate ticket. Check out the details on our website!

Also, on Friday evening, June 26 after our first day of talks, we are offering our version of “Pizza Planet”. We will provide a pizza supper, followed by transportation to the Roper Mountain Science Center and Planetarium show, and an opportunity to visit the Daniel Observatory. This experience is included as part of your conference fee, so come join the fun! You are guaranteed a ticket if you register by May 28!

The conference planning team needs to know if you are coming so we can make sure and have everything ready for you (t-shirt, lunches, name badge, planetarium tickets, etc). Please register today!

TL;DR

Twenty years of desktop environments — WindowMaker, KDE3, fvwm, i3 — and the lesson is always the same: consistency beats aesthetics. This is how I took one vim colorscheme (iceberg) and spread it across my entire desktop: terminal, prompt, window manager, launcher, screensaver, and even git. One palette aligns the whole UI. The only constraint you lay on yourself: timebox it. Because theming takes a fuck-ton of time.

Iceberg ahead

I’m no ricer by any means. But I can tell you my process. Nothing is planned, but everything I did, I did with a masterplan.

Weekly Challenge 374

Each week Mohammad S. Anwar sends out The Weekly Challenge, a chance for all of us to come up with solutions to two weekly tasks. My solutions are written in Python first, and then converted to Perl. Unless otherwise stated, Copilot (and other AI tools) have NOT been used to generate the solution. It's a great way for us all to practice some coding.

Task 1: Count Vowel

Task

You are given a string.

Write a script to return all possible vowel substrings in the given string. A vowel substring is a substring that only consists of vowels and has all five vowels present in it.

My solution

It seems many Team PWC users sent Mohammad "so many emails" when the examples did not match the expected output. As I follow TDD when completing the challenges, I also picked this up.

For this task, I have two loops to generate all possible substrings. The variable start goes from 0 to 5 less than the length of the string. This is because a valid answer needs to have at least five letters. The variable end goes from start + 5 to the length of the string.

I then check if the substr contains each vowel and does not contain any non-vowels. If it does, I add it to the solution list.

def count_vowel(input_string: str) -> list[str]:
    l = len(input_string)
    vowels = ["a", "e", "i", "o", "u"]
    solution = []

    for start in range(l - 4):
        for end in range(start+5, l+1):
            substr = input_string[start:end]
            if (
                all(v in substr for v in vowels) and
                not any(c not in vowels for c in substr)
            ):
                solution.append(substr)

    return solution

The Perl logic uses a variable length instead of end to reflect how the substr function works.

use List::Util 'any';

sub main ($input_string) {
    my $l        = length($input_string);
    my @vowels   = (qw/a e i o u/);
    my @solution = ();

    # The last useful start leaves room for five characters
    foreach my $start ( 0 .. $l - 5 ) {
        foreach my $length ( 5 .. $l - $start ) {
            my $substr = substr( $input_string, $start, $length );

            # Skip substrings containing anything other than vowels
            if ( $substr !~ /^[aeiou]+$/ ) {
                next;
            }

            # Skip substrings that are missing at least one vowel
            if ( any { index( $substr, $_ ) == -1 } @vowels ) {
                next;
            }

            push @solution, $substr;
        }
    }

    say "(" . join( ", ", map { qq{"$_"} } @solution ) . ")";
}

Examples

The order in the output differs from the examples.

$ ./ch-1.py aeiou

("aeiou")

$ ./ch-1.py aaeeeiioouu

("aaeeeiioou", "aaeeeiioouu", "aeeeiioou", "aeeeiioouu")

$ ./ch-1.py aeiouuaxaeiou

("aeiou", "aeiouu", "aeiouua", "eiouua", "aeiou")

$ ./ch-1.py uaeiou

("uaeio", "uaeiou", "aeiou")

$ ./ch-1.py aeioaeioa

()
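The two checks described above (every vowel present, no non-vowel present) can also be collapsed into a single set comparison. This is a minimal sketch rather than the solution used in the post; the name vowel_substrings is hypothetical, and the asserted values are the example runs listed above.

```python
VOWELS = set("aeiou")


def vowel_substrings(s: str) -> list[str]:
    """Return every substring made only of vowels that contains all five."""
    n = len(s)
    return [
        s[start:end]
        for start in range(n - 4)           # empty range when n < 5
        for end in range(start + 5, n + 1)  # at least five characters
        # Equal sets means: nothing but vowels, and no vowel missing
        if set(s[start:end]) == VOWELS
    ]


print(vowel_substrings("uaeiou"))     # ['uaeio', 'uaeiou', 'aeiou']
print(vowel_substrings("aeioaeioa"))  # []
```

Comparing sets does the work of both the all() and the any() test, at the cost of building a small set for each candidate substring.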

Task 2: Largest Same-digits Number

Task

You are given a string containing 0-9 digits only.

Write a script to return the largest number with all digits the same in the given string.

My solution

I always look at the examples to fill in answers that aren't mentioned in the task description. One thing I wasn't sure about was whether the repeated digits had to be consecutive. In this instance, the examples don't help answer that question. I decided that they didn't need to be.

My first thought was to sort the digits by frequency, and then by value when the frequencies tie. But this would not work with numbers like 100 (where 0 appears most often, yet 1 is larger than 00).
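To make the problem with 100 concrete, here is a small sketch of that frequency-first idea. It is an illustration only, not code from the post, and the function name by_frequency_first is hypothetical.

```python
from collections import Counter


def by_frequency_first(digits: str) -> int:
    """Naive idea: the most frequent digit wins, ties go to the larger digit."""
    digit, count = max(Counter(digits).items(), key=lambda kv: (kv[1], kv[0]))
    return int(digit * count)


print(by_frequency_first("6777133339"))  # 3333, which happens to be right
print(by_frequency_first("100"))         # 0, although the single digit 1 is larger
```

The digit that occurs most often does not always form the largest number, because a long run of zeros is still zero.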

The solution I came up with was to count the frequency of each digit using the Counter class. I then loop through the resulting dict (hash in Perl), build the number formed by repeating each digit as many times as it occurs, and keep the highest one.

from collections import Counter
import re

def largest_number(input_string) -> int:
    if not re.search(r'^\d+$', input_string):
        raise ValueError("String should only contain numbers")

    largest_number = 0
    freq = Counter(input_string)

    for digit, count in freq.items():
        number = int(digit * count)
        if number > largest_number:
            largest_number = number

    return largest_number

The Perl solution follows the same logic.

Examples

$ ./ch-2.py 6777133339

3333

$ ./ch-2.py 1200034

4

$ ./ch-2.py 44221155

55

$ ./ch-2.py 88888

88888

$ ./ch-2.py 11122233

222
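One way to see why the examples do not settle whether the repeated digits must be adjacent is to run both readings side by side. The sketch below is an illustration, not the code from the post: both function names are hypothetical, and it assumes a non-empty string of digits.

```python
import re
from collections import Counter


def largest_any_position(digits: str) -> int:
    """Reading used in the post: repeated digits may sit anywhere."""
    return max(int(d * n) for d, n in Counter(digits).items())


def largest_consecutive(digits: str) -> int:
    """Alternative reading: only unbroken runs of one digit count."""
    return max(int(m.group(0)) for m in re.finditer(r"(\d)\1*", digits))


for s in ["6777133339", "1200034", "44221155", "88888", "11122233"]:
    # Both readings give the same answer on all five examples
    print(s, largest_any_position(s), largest_consecutive(s))

# An input where the two readings disagree
print(largest_any_position("1213"), largest_consecutive("1213"))  # 11 3
```

The two readings only diverge when a digit repeats with other digits in between, which none of the five examples do.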

Dave writes:

Last month I continued looking into race conditions in threads and threads::shared. I have now reached the point where the threads-related test suite can run cleanly under valgrind --tool=helgrind. But there is still an occasional crash in blocks.t when run repeatedly for many hours in parallel on 20 terminals, which I am currently working on. I'm hopeful that once that is fixed, the branch will be ready to push.

Summary:

- 33:35 GH #24258 dist/threads/t/free.t: Rare test failure in debugging build on FreeBSD

Total:

- 33:35 (HH:MM)

Paul writes:

April kept me busy lining up PPC0030 in the hope of putting that into 5.45.1, with a couple of other items of interest.

- 7.5 = Continue progress on PPC0030

- https://github.com/Perl/perl5/pull/24304 (draft)

- 1.5 = Assisting veesh with the implementation of the optional chaining PPC

- 1 = Managing various PPC documents

Total: 10 hours

My next focus will be to continue to get these various items ready for merge at the start of the 5.45 cycle, along with some more class system features and bugfixes.

Tony writes:

```
[Hours]  [Activity]

2026/04/01 Wednesday
 0.08    #24335 follow-up
 1.18    #24337 try to review
 2.03    #24005 work on docs
 0.30    #24251 comments
 0.12    #24134 re-check and trivial comment
 1.35    #24005 more docs
 5.06

2026/04/02 Thursday
 0.53    ppc/discussion/84 research and comment
 0.82    #24308 read updates, try to find clarity with a correction
 0.57    #24105 follow-up on #24294
 0.58    #24304 review updates
 0.75    #24105 research and comment
 0.78    #24005 more docs (detailing $^P flags)
 4.03

2026/04/07 Tuesday
 1.73    #23676 re-work PR, testing, push to update/CI
 0.30    #24308 review updates and comment
 0.33    #13626 research and comment
 0.08    #24281 review update and approve
 0.52    #17077 research and comment
 0.52    #23995 research, testing and comment
 3.48

2026/04/08 Wednesday
 0.08    #24308 review discussion and approve
 0.37    #23995 research and testing, comment
 1.98    #24005 more docs
 1.52    #24005 more docs
 3.95

2026/04/09 Thursday
 0.43    #24349 review and comment
 1.22    #24105 review, research
 1.53    #24005 more docs, testing
 1.38    #24005 more docs, testing
 4.56

2026/04/13 Monday
 0.30    github notifications
 0.25    #24187 review discussion
 0.17    #24349 review and briefly comment
 0.65    #24361 review, research and comments
 0.42    #24362 review, research and comment
 0.20    #23660 comment
 1.13    #24005 add more sample code
 0.73    #24005 checking
 3.85

2026/04/14 Tuesday
 0.63    #24361 review updates, research and comment
 0.08    #24335 base issue now a blocker, re-request review for updates
 0.25    #24349 review updates and approve
 0.98    #24337 review, note decision to revert, look into the still failing issues
 0.22    #24366 review, research and approve
 0.43    #24364 review, comment and approve
 2.59

2026/04/15 Wednesday
 0.23    github notifications
 2.12    #23676 fix some issues in the PR, testing and git wizardry
 2.35

2026/04/16 Thursday
 1.17    #24370 comments
 0.20    #24369 review and approve
 0.50    #24360 review, review discussion and approve
 0.20    #24357 review and approve
 0.07    #23676/24335 apply to blead
 0.58    #24299 try to review, minor issues, need more thought for the actual fix
 0.20    #24343 review and approve
 0.05    #24299 briefly comment
 2.97

2026/04/20 Monday
 0.17    github notifications
 0.08    #24105 comment
 0.83    #24304 review updates and comments
 0.12    #24251 review updates and approve
 1.48    #10346 rewrite, testing, make PR 24381
 0.73    #24299 review
 1.12    #24999 review, comment with conditional approval
 4.53

2026/04/21 Tuesday
 0.12    github notifications
 0.20    ppc #81 review
 0.72    #24378 review and comment
 0.18    #24356 review - seems incomplete
 0.15    #24356 follow-up
 2.58    #24005 recheck, minor fixes and a more significant fix,
         work on moving debugging regular expressions to another pod
 3.95

2026/04/22 Wednesday
 0.28    #24361 review discussion and comment
 0.40    #24304 review updates and comments
 0.22    #24378 more comment
 0.37    #24005 commit clean up
 0.68    #24356 try to get this to generate new macros
 0.63    #24356 got it working, testing and comment
 0.55    #24005 review perldebguts
 3.13

2026/04/23 Thursday
 0.13    #24361 review latest and comment
 2.25    #24387 review, testing, debugging and comments
 0.57    review failing smoke reports - try to reproduce SelfLoader failures
 1.35    debugging SelfLoader - handle duplication error?
         sysseek(.. tell()...) seems suspect
 4.30

2026/04/28 Tuesday
 0.58    #24378 review updates and approve
 0.40    #24387 review updates and approve
 0.32    #24304 review updates and comment
 0.52    #24390 review, check build logs, testing and comment
 1.42    SelfLoader - research glibc source, debugging, testing
 3.24

2026/04/29 Wednesday
 1.55    #24396 review, research and comment
 0.20    #24394 review and approve
 1.98    SelfLoader research - possible causes, history, work on fix,
         long commit message, more testing, push for smoke-me
 3.73

2026/04/30 Thursday
 0.52    #24397 review, comment and approve
 1.77    #24389 review, research and approve
 0.13    #24388 review and approve
 2.42

Which I calculate is 58.14 hours.

Approximately 38 tickets were reviewed or worked on.
```

One of the defining characteristics of a good programmer is an instinct for keeping implementation details in the correct layer of an application.

That sounds abstract, but it turns out to explain a huge amount of the progress we’ve made in software development over the last twenty-five years.

And nowhere is that clearer than in Perl web development.

Many of us who built web applications during the dotcom boom spent years learning this lesson the hard way.

We wrote CGI programs that:

- parsed HTTP requests

- generated HTML by hand

- connected directly to databases

- embedded SQL inline

- mixed business logic with presentation

- relied on Apache behaviour

- assumed specific filesystem layouts

- and often only worked on one particular server configuration

It all worked. Until it didn’t.

The history of Perl web development is, in many ways, the history of gradually moving implementation details into more appropriate architectural layers.

The Early CGI Years

Early Perl CGI applications were often a single giant script.

You’d open a file and see:

- request handling

- authentication

- HTML generation

- SQL queries

- business logic

- configuration

- deployment assumptions

…all mixed together in a glorious ball of mud.

Something like this:

#!/usr/bin/perl

use CGI;
use DBI;

my $cgi = CGI->new;

print $cgi->header;
print "<html><body>";

my $dbh = DBI->connect(
    "dbi:mysql:test",
    "user",
    "pass"
);

my $sth = $dbh->prepare(
    "select * from users where id = ?"
);

$sth->execute($cgi->param('id'));

while (my $row = $sth->fetchrow_hashref) {
    print "<h1>$row->{name}</h1>";
}

print "</body></html>";

At the time, this felt perfectly normal.

And to be fair, it was a huge step forward from static HTML sites.

But the design had a fundamental problem:

Everything knew too much about everything else.

The application logic knew:

- how HTTP worked

- how HTML worked

- how Apache launched CGI scripts

- how the database worked

- how the operating system was configured

Every concern leaked into every other concern.

That made systems:

- hard to test

- hard to reuse

- hard to deploy

- hard to scale

- and terrifying to change

The First Big Lesson: Put Logic in Libraries

One of the first signs of a developer maturing is the realisation that application logic should live in reusable modules, not in front-end scripts.

Instead of this:

if ($user->{status} eq 'gold') {

$discount = 0.2;